How Photon Ring achieves near-hardware latency

Photon Ring is a zero-allocation pub/sub crate for Rust built around pre-allocated ring buffers with per-slot seqlock stamps. It targets the part of concurrent systems where queueing overhead dominates: market data, telemetry fanout, staged pipelines, and other hot-path broadcast workloads where every subscriber must observe every message.

The central insight is stamp-in-slot co-location: by embedding the seqlock sequence stamp directly alongside the payload in one `#[repr(C, align(64))]` struct, both ownership metadata and data reside within a single 64-byte cache line for payloads up to 56 bytes. Consumers validate and read in one L3 snoop instead of two, cutting coherence traffic in half.

```text
                 64 bytes (one cache line)
+-----------------------------------------------------------+
| stamp: AtomicU64 | value: T                               |
| (seqlock)        | (Pod: all bit patterns valid)          |
+-----------------------------------------------------------+
For T <= 56 bytes: stamp and value share one cache line.
Larger T spills to additional lines (still correct, slightly slower).
```
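To make the 56-byte threshold concrete, here is a minimal sketch of a slot with the layout shown above. The `Slot` type and the `cache_lines` helper are illustrative assumptions, not photon-ring's actual definitions. The helper also assumes the payload's alignment is at most 8 bytes, so the payload starts immediately after the 8-byte stamp.

```rust
use std::cell::UnsafeCell;
use std::mem::{align_of, size_of};
use std::sync::atomic::AtomicU64;

/// Illustrative slot: an 8-byte seqlock stamp followed by the payload,
/// aligned (and therefore padded) to a 64-byte cache line.
#[repr(C, align(64))]
pub struct Slot<T> {
    pub stamp: AtomicU64,
    pub value: UnsafeCell<T>,
}

/// Cache lines occupied by one slot for a payload of `payload_bytes`,
/// assuming the payload's alignment is at most 8 so it starts at offset 8.
pub const fn cache_lines(payload_bytes: usize) -> usize {
    (8 + payload_bytes + 63) / 64
}

fn main() {
    // 8-byte stamp + 56-byte payload: exactly one cache line.
    assert_eq!(size_of::<Slot<[u8; 56]>>(), 64);
    assert_eq!(align_of::<Slot<[u8; 56]>>(), 64);
    // A u64 payload still occupies a full (padded) line.
    assert_eq!(size_of::<Slot<u64>>(), 64);
    // One byte more spills into a second line.
    assert_eq!(size_of::<Slot<[u8; 57]>>(), 128);
    for bytes in [8, 56, 57, 4096] {
        println!("{bytes:>5} B payload -> {} cache line(s)", cache_lines(bytes));
    }
}
```

Because `align(64)` rounds the struct size up to a multiple of 64, even small payloads pay for a full line; that padding is what keeps neighbouring slots from sharing a line.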

```text
Write protocol:
  stamp = seq*2 + 1 (odd)
  fence(Release)
  memcpy(slot.value, data)
  stamp = seq*2 + 2 (even)
  cursor = seq (Release)

Read protocol:
  s1 = stamp.load(Acquire)
  if odd → spin
  if s1 < expected → Empty
  if s1 > expected → Lagged
  value = memcpy(slot)
  s2 = stamp.load(Acquire)
  if s1 == s2 → return value
  else → retry
```
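The protocol above can be sketched for a single slot as follows. This is a hypothetical, simplified model rather than photon-ring's code: `Slot`, `ReadError`, `write`, and `read` are invented names, `T: Copy` stands in for `T: Pod`, and the payload is copied with volatile reads and writes. The sketch follows the common C++/Rust seqlock recipe, which places an explicit `fence(Acquire)` between the payload copy and the second stamp load. Strictly speaking, a racing non-atomic copy is still a data race under the Rust memory model; the sketch relies on the same pragmatic assumption as many seqlock implementations.

```rust
use std::cell::UnsafeCell;
use std::hint;
use std::ptr;
use std::sync::atomic::{fence, AtomicU64, Ordering};

#[derive(Debug, PartialEq, Eq)]
pub enum ReadError {
    Empty,  // the expected sequence has not been written yet
    Lagged, // the slot already holds a newer sequence
}

#[repr(C, align(64))]
pub struct Slot<T> {
    stamp: AtomicU64,
    value: UnsafeCell<T>,
}

// Readers only copy `value` out and validate it against the stamp.
unsafe impl<T: Copy + Send> Sync for Slot<T> {}

impl<T: Copy> Slot<T> {
    pub fn new(init: T) -> Self {
        Slot { stamp: AtomicU64::new(0), value: UnsafeCell::new(init) }
    }

    /// Write protocol. Assumes a single writer per slot.
    pub fn write(&self, seq: u64, data: T) {
        self.stamp.store(seq * 2 + 1, Ordering::Relaxed); // odd: write in progress
        fence(Ordering::Release); // orders the odd stamp before the payload write
        unsafe { ptr::write_volatile(self.value.get(), data) };
        self.stamp.store(seq * 2 + 2, Ordering::Release); // even: write complete
    }

    /// Read protocol for sequence `seq`.
    pub fn read(&self, seq: u64) -> Result<T, ReadError> {
        let expected = seq * 2 + 2;
        loop {
            let s1 = self.stamp.load(Ordering::Acquire);
            if s1 & 1 == 1 {
                hint::spin_loop(); // writer is mid-copy
                continue;
            }
            if s1 < expected {
                return Err(ReadError::Empty);
            }
            if s1 > expected {
                return Err(ReadError::Lagged);
            }
            // Speculative copy; may be torn if a writer starts meanwhile.
            let value = unsafe { ptr::read_volatile(self.value.get()) };
            fence(Ordering::Acquire); // keep the copy before the re-check
            let s2 = self.stamp.load(Ordering::Relaxed);
            if s1 == s2 {
                return Ok(value);
            }
            // Stamp moved: discard the (possibly torn) copy and retry.
        }
    }
}

fn main() {
    let slot = Slot::new(0u64);
    assert_eq!(slot.read(0), Err(ReadError::Empty));
    slot.write(0, 42);
    assert_eq!(slot.read(0), Ok(42));
    slot.write(1, 43); // next sequence to land here (a capacity-1 ring)
    assert_eq!(slot.read(0), Err(ReadError::Lagged));
    assert_eq!(slot.read(1), Ok(43));
}
```

The odd stamp is what lets a reader tell "write in progress" apart from "write finished", and comparing the even stamp to `expected` is what produces the Empty and Lagged outcomes without any extra per-subscriber state in the slot.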

SubscriberGroup batches N logical consumers into a single seqlock read, cutting the per-consumer cost to about 0.2 ns.
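One way to picture this batching: a single validated read per sequence number, whose result is then handed to N logical consumers in turn. The `Group` type, its `poll` method, and the `read_at` closure (standing in for the seqlock read of one slot) are hypothetical; photon-ring's actual SubscriberGroup API may look quite different.

```rust
/// Hypothetical group of N logical consumers that advance in lockstep and
/// therefore share one cursor and one validated read per message.
pub struct Group<const N: usize> {
    cursor: u64,
    processed: [u64; N], // per-consumer bookkeeping
}

impl<const N: usize> Group<N> {
    pub fn new() -> Self {
        Group { cursor: 0, processed: [0; N] }
    }

    /// Performs ONE read via `read_at` (standing in for the seqlock read of
    /// the slot at `cursor`) and, on success, invokes `handle` once per
    /// logical consumer. Returns false when no new message is available.
    pub fn poll<T: Copy>(
        &mut self,
        read_at: impl FnOnce(u64) -> Option<T>,
        mut handle: impl FnMut(usize, T),
    ) -> bool {
        match read_at(self.cursor) {
            Some(value) => {
                for (id, count) in self.processed.iter_mut().enumerate() {
                    handle(id, value);
                    *count += 1;
                }
                self.cursor += 1;
                true
            }
            None => false,
        }
    }

    pub fn processed(&self, id: usize) -> u64 {
        self.processed[id]
    }
}

fn main() {
    let published = [10u64, 20, 30]; // stand-in for ring contents
    let mut reads = 0;
    let mut group: Group<4> = Group::new();
    while group.poll(
        |seq| {
            reads += 1;
            published.get(seq as usize).copied()
        },
        |id, v| println!("consumer {id} got {v}"),
    ) {}
    // 3 messages x 4 consumers handled with 4 reads (3 hits + 1 empty poll).
    println!("reads = {reads}, consumer 0 processed {}", group.processed(0));
}
```

The saving comes from amortization: the stamp loads and payload copy happen once per message, while each additional logical consumer only costs a function call.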

`publish` and `try_recv` never touch the allocator: no GC pauses, no malloc jitter.

Payloads are bound by `T: Pod` (every bit pattern is valid), which makes speculative torn reads safe to discard. This is a compile-time proof, not a runtime check.
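The Pod requirement can be modeled as an `unsafe` marker trait. The sketch below defines its own hypothetical `Pod` trait (photon-ring's trait and its optional `derive` feature may differ; the bytemuck crate provides a widely used equivalent) and shows why the bound matters: any byte pattern, including a torn copy, decodes to some valid value, so a reader can always materialize a `T` and simply throw it away if the stamp check fails. `bool` deliberately has no impl, so `from_bytes::<bool>` is rejected at compile time.

```rust
/// Hypothetical marker trait. Safety contract: every bit pattern of the
/// type's size must be a valid value (so no bool, char, references, or padding).
pub unsafe trait Pod: Copy + 'static {}

unsafe impl Pod for u8 {}
unsafe impl Pod for u32 {}
unsafe impl Pod for u64 {}
unsafe impl Pod for f64 {}
unsafe impl<T: Pod, const N: usize> Pod for [T; N] {}

/// 8 + 4 + 4 bytes with repr(C): no padding, every field Pod.
#[derive(Clone, Copy, Debug, PartialEq)]
#[repr(C)]
pub struct Quote {
    pub price: f64,
    pub qty: u32,
    pub venue: u32,
}
unsafe impl Pod for Quote {}

/// Reinterprets raw bytes as a `T`. Sound only because of the `Pod` bound:
/// whatever bytes arrive (even a torn copy) form some valid `T`.
pub fn from_bytes<T: Pod>(bytes: &[u8]) -> Option<T> {
    if bytes.len() != std::mem::size_of::<T>() {
        return None;
    }
    // SAFETY: length checked above; read_unaligned tolerates any source
    // alignment; T: Pod guarantees any byte pattern is a valid T.
    Some(unsafe { std::ptr::read_unaligned(bytes.as_ptr().cast::<T>()) })
}

fn main() {
    let q: Quote = from_bytes(&[0u8; 16]).unwrap();
    println!("{q:?}");
    // from_bytes::<bool>(&[2]); // does not compile: bool is not Pod
}
```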

Publisher is single-producer via `&mut self` (no CAS on the write path). MpPublisher adds a lock-free multi-producer path. The named-topic `Photon<T>` bus and the heterogeneous `TypedBus` are also included.
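The difference between the two publisher flavors comes down to how a writer claims its next sequence number. In this sketch (hypothetical `SpSequencer` and `MpSequencer` types, not photon-ring's), `&mut self` lets the single-producer side use a plain counter, while the multi-producer side hands out unique numbers with one atomic `fetch_add`. A complete multi-producer ring must also keep readers from consuming slots that are claimed but not yet written; that part is left out here, and the crate's `MpPublisher` may use a different scheme.

```rust
use std::sync::atomic::{AtomicU64, Ordering};
use std::sync::Arc;
use std::thread;

/// Single producer: `claim` takes `&mut self`, so the borrow checker admits
/// only one caller at a time and a plain `u64` counter suffices (no CAS).
pub struct SpSequencer {
    next: u64,
}

impl SpSequencer {
    pub fn new() -> Self {
        SpSequencer { next: 0 }
    }
    pub fn claim(&mut self) -> u64 {
        let seq = self.next;
        self.next += 1;
        seq
    }
}

/// Multi producer: clones share one atomic counter; `fetch_add` hands every
/// caller a unique sequence number without locks or retry loops.
#[derive(Clone)]
pub struct MpSequencer {
    next: Arc<AtomicU64>,
}

impl MpSequencer {
    pub fn new() -> Self {
        MpSequencer { next: Arc::new(AtomicU64::new(0)) }
    }
    pub fn claim(&self) -> u64 {
        self.next.fetch_add(1, Ordering::Relaxed)
    }
}

fn main() {
    let mut sp = SpSequencer::new();
    let (a, b) = (sp.claim(), sp.claim());
    println!("single producer claimed {a} then {b}");

    let mp = MpSequencer::new();
    let workers: Vec<_> = (0..4)
        .map(|_| {
            let producer = mp.clone();
            thread::spawn(move || (0..1000).map(|_| producer.claim()).collect::<Vec<u64>>())
        })
        .collect();
    let mut all: Vec<u64> = workers.into_iter().flat_map(|w| w.join().unwrap()).collect();
    all.sort_unstable();
    all.dedup();
    println!("{} unique sequence numbers", all.len());
}
```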

The core ring is `no_std` compatible and requires only `alloc`. The pipeline topology builder, hugepages, and CPU affinity are available on supported desktop/server platforms.

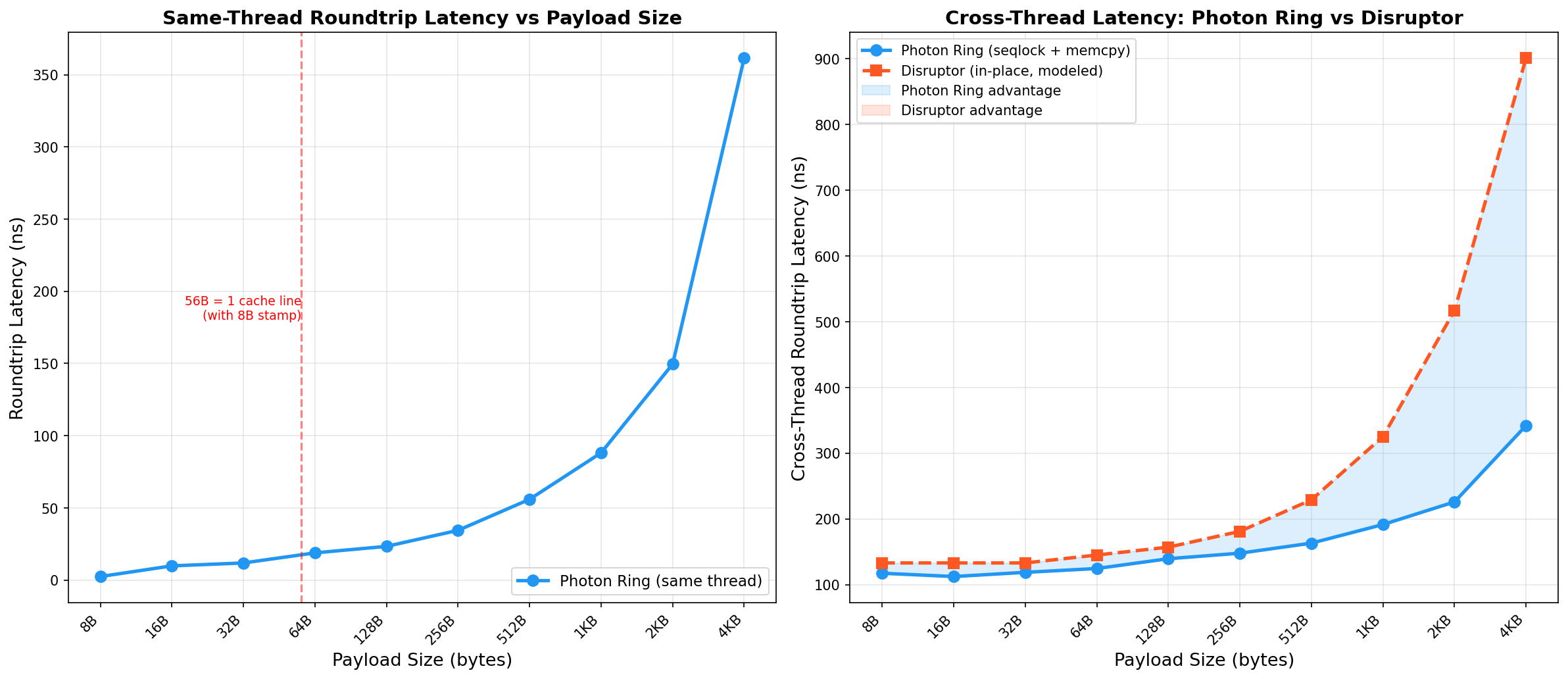

Criterion (100 samples, `--release`, no custom RUSTFLAGS) on two machines: (A) Intel i7-10700KF and (B) Apple M1 Pro. Numbers are medians unless stated otherwise.

Compared against disruptor v4.0.0 (BusySpin wait strategy, 4096-slot ring, same binary, same Criterion invocation).

| Operation | Photon Ring (A) | Photon Ring (B) | disruptor-rs (A) | disruptor-rs (B) |
|---|---|---|---|---|
| Publish only | 2.8 ns | 2.4 ns | 30.6 ns | 15.3 ns |
| Cross-thread roundtrip | 95 ns | 130 ns | 138 ns | 186 ns |
| Same-thread roundtrip (1 sub) | 2.7 ns | 8.8 ns | — | — |
| Fanout (10 subscribers) | 17.0 ns | 27.7 ns | — | — |
| SubscriberGroup read | 2.6 ns | 8.8 ns | — | — |
| MPMC (1 pub + 1 sub) | 12.1 ns | 10.6 ns | — | — |
| Empty poll | 0.85 ns | 1.1 ns | — | — |
| Batch publish 64 + drain | 158 ns | 282 ns | — | — |
| Struct roundtrip (24-byte Pod) | 4.8 ns | 9.3 ns | — | — |
| One-way latency p50 (RDTSC) | 48 ns | — | — | — |
| Sustained throughput | ~300M msg/s | ~88M msg/s | — | — |

Sustained message rate: single publisher, single subscriber, BusySpin.

| Machine | Throughput | Notes |
|---|---|---|
| Intel i7-10700KF (A) | ~300M msg/s | BusySpin, 4096 slots, u64 payload |
| Apple M1 Pro (B) | ~88M msg/s | BusySpin, 4096 slots, u64 payload |

Photon Ring outperforms disruptor-rs at every payload size tested (8 B – 4 KiB). See the full payload scaling analysis.

Results are measured with `T: Pod` payloads and no custom RUSTFLAGS.

CPU governor, Turbo Boost, SMT, and core pinning are not controlled in the Criterion suite.

Run cargo bench on your own hardware and treat published figures as indicative snapshots.

How Photon Ring fits alongside the broader Rust concurrency ecosystem

| Feature | Photon Ring | disruptor-rs v4 | crossbeam-channel | bus |
|---|---|---|---|---|
| Delivery model | Broadcast | Broadcast | Point-to-point queue | Broadcast |
| Publish cost | 2.8 ns / 12.1 ns (MPMC) | 30.6 ns | — | — |
| Cross-thread roundtrip | 95 ns | 138 ns | — | — |
| Sustained throughput | ~300M msg/s | — | — | — |
| Topology / pipeline builder | Yes | Yes | No | No |
| Batch publish & drain | Yes | Yes | Iterator only | No |
| Named-topic bus | Yes | No | No | No |
| Heterogeneous-type bus | Yes (TypedBus) | No | No | No |
| Backpressure | Optional | Default | Default | Default |
| no_std compatible | Yes | No | No | No |
| Multi-producer (MPMC) | Yes | Yes | Yes | No |
| CPU affinity helpers | Yes | No | No | No |
| Hugepages / mlock | Linux only | No | No | No |
| Lazy drop on overflow | Yes (lossy default) | Blocks | Blocks or drops | Blocks |

| Constraint | Rationale |
|---|---|
| T: Pod | Every bit pattern must be valid. Torn reads from speculative seqlock copies are safe to reject without UB. |
| Power-of-two capacity | Indexing uses seq & mask instead of %, avoiding division on the hot path. |
| Single producer by default | &mut self enforces one writer at the type level. No CAS on the write path. |
| Lossy overflow by default | Publisher never blocks. Slow subscribers detect drops via TryRecvError::Lagged. |
| 64-bit atomics required | The seqlock stamp is a u64. Platforms without atomic 64-bit operations are not supported. |
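The power-of-two, lossy-overflow, and 64-bit constraints translate into a few lines of arithmetic. This is a hypothetical helper (`Ring`, `index`, `lagged_by`, and `can_publish` are illustrative names, not the crate's API): it shows mask indexing, how a lossy subscriber can tell it has fallen more than one ring behind, and the check a bounded publisher would make before overwriting a slot.

```rust
// The stamp is a u64, so refuse to build without 64-bit atomics.
#[cfg(not(target_has_atomic = "64"))]
compile_error!("this sketch requires 64-bit atomic operations");

/// Hypothetical ring geometry; sequences start at 0 and only increase.
pub struct Ring {
    capacity: u64,
    mask: u64,
}

impl Ring {
    pub fn new(capacity: u64) -> Self {
        assert!(capacity.is_power_of_two(), "capacity must be a power of two");
        Ring { capacity, mask: capacity - 1 }
    }

    /// `seq & mask` equals `seq % capacity` when capacity is a power of two.
    pub fn index(&self, seq: u64) -> usize {
        (seq & self.mask) as usize
    }

    /// Lossy mode: `published` messages (sequences 0..published) have been
    /// written and only the last `capacity` are still in the ring. Returns
    /// how many messages a subscriber at `cursor` has lost, if any.
    pub fn lagged_by(&self, published: u64, cursor: u64) -> Option<u64> {
        let oldest = published.saturating_sub(self.capacity);
        if cursor < oldest { Some(oldest - cursor) } else { None }
    }

    /// Bounded mode: writing `next` reuses the slot of `next - capacity`,
    /// so it is allowed only if the slowest subscriber is past that sequence.
    pub fn can_publish(&self, next: u64, slowest_cursor: u64) -> bool {
        next.saturating_sub(slowest_cursor) < self.capacity
    }
}

fn main() {
    let ring = Ring::new(8);
    println!("seq 13 -> slot {}", ring.index(13)); // slot 5
    println!("{:?}", ring.lagged_by(20, 10)); // Some(2)
    println!("{}", ring.can_publish(8, 0)); // false: would overwrite unread seq 0
}
```

In lossy mode the publisher never consults `can_publish`; it always overwrites, and the cost of a slow reader shows up only on that reader's side as a Lagged result.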

Channels, buses, pipelines, and wait strategies — all composable. See docs.rs for the full reference.

```rust
// Single producer, multiple consumers: the fastest path
let (mut pub_, subs) = channel::<u64>(1024);
let mut sub = subs.subscribe();
pub_.publish(42);
assert_eq!(sub.try_recv(), Ok(42));

// Bounded backpressure (publisher blocks instead of overwriting)
let (mut pub_, subs) = channel_bounded::<u64>(1024, 512);

// Multiple producers
let (mp_pub, subs) = channel_mpmc::<u64>(1024);
let mp_pub2 = mp_pub.clone(); // MpPublisher: Clone + Send + Sync
```

```rust
let bus = Photon::<u64>::new(1024);
let mut prices = bus.publisher("prices");
let mut trades = bus.publisher("trades");
let mut sub = bus.subscribe("prices");
prices.publish(100);
assert_eq!(sub.try_recv(), Ok(100));
```

```rust
let (input, pipeline) = Pipeline::builder()
    .capacity(4096)
    .input::<u64>()
    .then(|x| x * 2) // stage 1: dedicated thread
    .then(|x| x + 1) // stage 2: dedicated thread
    .build();
input.publish(21);

// Fan-out: diamond topology
let (input, _pipeline) = Pipeline::builder()
    .capacity(1024)
    .input::<u64>()
    .fan_out(|x| x * 2, |x| x + 100) // two parallel branches
    .build();
```
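Conceptually, each `.then` stage in a linear pipeline runs on its own thread and forwards results downstream. The sketch below illustrates that topology with std's `mpsc` channels swapped in for photon-ring rings, so it shows the shape of a two-stage pipeline, not the crate's implementation or its performance. `stage` and `two_stage` are hypothetical helpers, and the diamond `fan_out` case is not modeled.

```rust
use std::sync::mpsc::{channel, Receiver, Sender};
use std::thread::{self, JoinHandle};

/// Spawns one dedicated thread that applies `f` to every input and forwards
/// the result. The stage exits when its upstream sender is dropped.
fn stage<F>(input: Receiver<u64>, f: F) -> (Receiver<u64>, JoinHandle<()>)
where
    F: Fn(u64) -> u64 + Send + 'static,
{
    let (tx, rx) = channel();
    let handle = thread::spawn(move || {
        for x in input {
            if tx.send(f(x)).is_err() {
                break; // downstream hung up
            }
        }
    });
    (rx, handle)
}

/// Two-stage linear pipeline: x * 2, then + 1, each on its own thread.
fn two_stage() -> (Sender<u64>, Receiver<u64>, Vec<JoinHandle<()>>) {
    let (input, rx0) = channel();
    let (rx1, h1) = stage(rx0, |x| x * 2);
    let (rx2, h2) = stage(rx1, |x| x + 1);
    (input, rx2, vec![h1, h2])
}

fn main() {
    let (input, output, handles) = two_stage();
    input.send(21).unwrap();
    println!("{}", output.recv().unwrap()); // 43
    drop(input); // closing the input shuts the stages down in order
    for h in handles {
        h.join().unwrap();
    }
}
```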

```rust
use photon_ring::WaitStrategy;

// Absolute lowest wakeup latency
sub.recv_with(WaitStrategy::BusySpin);
// Cooperative spinning (yields CPU between spins)
sub.recv_with(WaitStrategy::YieldSpin);
// Exponential backoff (good for mixed loads)
sub.recv_with(WaitStrategy::BackoffSpin);
// Automatically tunes based on observed latency
sub.recv_with(WaitStrategy::Adaptive);
```
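The first three wait strategies differ only in what a subscriber does between failed polls. Here is a minimal sketch using a hypothetical local `Wait` enum and `wait_for` function (not photon-ring's `WaitStrategy` or `recv_with`). The backoff constants (up to 2^9 spins, then 50 µs sleeps) are arbitrary assumptions, and `Adaptive` is omitted because its tuning rule is not described here.

```rust
use std::hint;
use std::thread;
use std::time::Duration;

/// Hypothetical stand-in for the strategy names above.
#[derive(Clone, Copy, Debug)]
pub enum Wait {
    BusySpin,
    YieldSpin,
    BackoffSpin,
}

/// Polls `try_get` until it yields a value, idling between attempts
/// according to `strategy`.
pub fn wait_for<T>(strategy: Wait, mut try_get: impl FnMut() -> Option<T>) -> T {
    let mut round: u32 = 0;
    loop {
        if let Some(v) = try_get() {
            return v;
        }
        match strategy {
            // Burn the core: lowest wakeup latency, 100% CPU.
            Wait::BusySpin => hint::spin_loop(),
            // Let the OS scheduler run other threads between polls.
            Wait::YieldSpin => thread::yield_now(),
            // Spin 2^round times with growing rounds, then fall back to sleeping.
            Wait::BackoffSpin => {
                if round < 10 {
                    for _ in 0..(1u32 << round) {
                        hint::spin_loop();
                    }
                    round += 1;
                } else {
                    thread::sleep(Duration::from_micros(50));
                }
            }
        }
    }
}

fn main() {
    let mut polls = 0;
    let v = wait_for(Wait::BackoffSpin, || {
        polls += 1;
        (polls == 5).then_some("ready")
    });
    println!("{v} after {polls} polls");
}
```

The trade-off is latency versus CPU: BusySpin reacts fastest but pins a core, while the backoff variant gives cycles back once the stream goes quiet.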

| Platform | Core ring | Affinity | Topology | Hugepages |
|---|---|---|---|---|
| x86_64 Linux | Yes | Yes | Yes | Yes |
| x86_64 macOS / Windows | Yes | Yes | Yes | No |
| aarch64 Linux | Yes | Yes | Yes | Yes |
| aarch64 macOS (Apple Silicon) | Yes | Yes | Yes | No |
| wasm32 | Yes | No | No | No |
| FreeBSD / NetBSD / Android | Yes | Yes | Yes | No |
| 32-bit ARM (Cortex-M) | No | No | No | No |

From zero to a working channel in under a minute

```toml
[dependencies]
photon-ring = "2"

# Optional features
# photon-ring = { version = "2", features = ["derive", "hugepages"] }
```

```rust
use photon_ring::{channel, Photon};

fn main() {
    // SPMC: one publisher, multiple independent subscribers
    let (mut pub_, subs) = channel::<u64>(1024);
    let mut sub_a = subs.subscribe();
    let mut sub_b = subs.subscribe();
    pub_.publish(42);

    // Both subscribers see the same message
    assert_eq!(sub_a.try_recv(), Ok(42));
    assert_eq!(sub_b.try_recv(), Ok(42));

    // Named-topic bus
    let bus = Photon::<u64>::new(1024);
    let mut p = bus.publisher("prices");
    let mut s = bus.subscribe("prices");
    p.publish(100);
    assert_eq!(s.try_recv(), Ok(100));
}
```

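```rust
use photon_ring::channel;
use std::thread;

const COUNT: u64 = 1_000_000;

let (mut pub_, subs) = channel::<u64>(4096);
let mut sub = subs.subscribe();

let consumer = thread::spawn(move || loop {
    match sub.try_recv() {
        Ok(v) => {
            /* process v */
            // The final message is the newest, so it is never overwritten.
            if v == COUNT - 1 {
                break;
            }
        }
        // Empty or Lagged: keep polling instead of exiting on the first empty
        // read (assumes the subscriber resynchronizes after Lagged).
        Err(_) => std::hint::spin_loop(),
    }
});

for i in 0..COUNT {
    pub_.publish(i);
}
consumer.join().unwrap();
```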